This is the full developer documentation for Joist ORM

# Avoiding N+1s

> Documentation for Avoiding N+1s

Joist is built on Facebook’s [dataloader](https://github.com/graphql/dataloader) library, which means Joist avoids N+1s in a fundamental, systematic way that just works.

This foundation comes from Joist’s roots as an ORM for GraphQL backends, which are particularly prone to N+1s (see below), but is a boon to any system (REST, GRPC, etc.).

## N+1s: Lazy Loading in a Loop

[Section titled “N+1s: Lazy Loading in a Loop”](#n1s-lazy-loading-in-a-loop)

As a short explanation, the term “N+1” is what *can* happen for code that looks like:

```typescript

// Get an author and their books

const author = await em.load(Author, "a:1");

const books = await author.books.load();

// Do something with each book

await Promise.all(books.map(async (book) => {

// Now I want each book's reviews... in Joist this does _not_ N+1

const reviews = await book.reviews.load();

}));

```

Without Joist (or a similar Dataloader-ish approach), the risk is each `book.reviews.load()` causes its own `SELECT * FROM book_reviews WHERE book_id = ...`. I.e.:

* the 1st loop calls `SELECT * FROM reviews WHERE book_id = 1`,

* the 2nd loop calls `SELECT * FROM reviews WHERE book_id = 2`,

* etc.,

Such that if we have `N` books, we will make `N` SQL queries, one for each book id.

If we count the initial `SELECT * FROM books WHERE author_id = 1` query as `1`, this means we’ve made `N + 1` queries to process each of the author’s books, hence the term N+1.

However, with Joist the above code will issue only **three queries**:

```sql

-- first em.load

SELECT * FROM authors WHERE id = 1;

-- author.books.load()

SELECT * FROM books WHERE author_id = 1;

-- All of the book.reviews.load() combined into 1 query

SELECT * FROM book_reviews WHERE book_id IN (1, 2, 3, ...);

```

This N+1 prevention works not only in our 3-line `await Promise.all` example, but also works in complex codepaths where the business logic of “process each book” (and any lazy loading it might trigger) is spread out across helper methods, validation rules, entity lifecycle hooks, etc.

Tip

In one of Joist’s alpha features, join-based preloading, these 3 queries can actually be collapsed into a single SQL call, although achieving this does require an up-front populate hint.

## Type-Safe Preloading

[Section titled “Type-Safe Preloading”](#type-safe-preloading)

While the 1st snippet shows that Joist avoids N+1s in `async` / `Promise.all`-heavy code, Joist also supports populate hints, which not only **preload the data** but also **change the types to allow non-async access**.

With Joist, the above code can be rewritten as:

```typescript

// Get an author and their books _and_ the books' reviews

const author = await em.load(Author, "a:1", { books: "reviews" });

// Do something with each book _and_ its reviews ... with no awaits!

author.books.get.map((book) => {

// The `.get` method is available here only b/c we passed "reviews" to em.load

const reviews = book.reviews.get;

});

```

And it has exactly the same runtime semantics (i.e. number of SQL calls) as the previous `async/await`-based code: the **same three queries** are issued for both “with populate hints” and “without populate hints” code.

See [Load-Safe Relations](./load-safe-relations.md) for more information about this feature, however we point it out here because while populate hints are great for writing non-async code & avoiding N+1s (other ORMs like ActiveRecord use them), in Joist populate hints are **supported but *not required*** to avoid N+1s.

This is key, because in a sufficiently large/complex codebase, it can be **extremely hard to know ahead of time** exactly the right populate hint(s) that an endpoint should use to preload its data in an N+1 safe manner.

With Joist, you don’t have to worry anymore: if you use populate hints, that’s great, you won’t have N+1s. But if you end up with business logic (helper methods, validation rules, etc.) being called in an `async` loop, **it will still be fine**, and not N+1, because in Joist both populate hints & “old-school” `async/await` access are built on top of the same Dataloader-based, N+1-safe core.

## Longer Background

[Section titled “Longer Background”](#longer-background)

### Common/Tedious Pitfall

[Section titled “Common/Tedious Pitfall”](#commontedious-pitfall)

N+1s have plagued ORMs, in many programming languages, because the de facto ORM approach of “relations are just methods on an object” (i.e. `author1.getBooks()` or `book1.getAuthor()` will lazy-load the requested data from the database) causes a **leaky abstraction**—normally method calls are super-cheap in-memory accesses, but ORM methods that make expensive I/O calls are fundamentally not “super-cheap”.

These methods that implicitly issue I/O calls are powerful and very ergonomic, however they are almost **too ergonomic**: it’s very natural for programmers to, given a list of objects, loop over those objects and access their methods, and unwittingly cause an N+1.

For example, in Rails ActiveRecord, N+1s happen by default, and the programmer needs to tell ActiveRecord ahead of time which collections to preload:

```ruby

author = Author.find_by_id("1");

# The `include(:reviews)` means reviews are fetched before the `for` loop

books = Book.find({ author_id: author.id }).include(:reviews)

books.each do |book|

# Now access the collection, and it's already in-memory.

# Without `include(:reviews)` this would still work but _silently N+1_

reviews = book.reviews.length;

end

```

This `include(:reviews)` resolves the performance issue, but relies on the programmer knowing what data will be accessed in loops ahead of time. This is possible, but as a codebase grows it becomes a tedious game of whack-a-mole, as the default behavior is inherently unsafe.

### Saved By the Event Loop

[Section titled “Saved By the Event Loop”](#saved-by-the-event-loop)

Joist is able to avoid N+1s **without preload hints** by leveraging Facebook’s [dataloader](https://github.com/graphql/dataloader) library to automatically batch multiple `load` operations into single SQL statements.

Dataloader leverages JavaScript’s synchronous/single-thread model, which is where JavaScript evaluates the `book.reviews.load()` method inside of `books.map`:

```typescript

await Promise.all(books.map(async (book) => {

const reviews = await book.reviews.load();

}));

```

The `book.reviews.load` method, when invoked, is fundamentally not allowed to make an immediate SQL call, because it would block the event loop.

Instead, the `load` method is forced to return a `Promise`, handle the I/O off the thread, and then later return the `reviews` that have been loaded.

And so the *actual* “immediate next thing” that this code does is not “make a SQL call for book1’s reviews”, but instead is the next iteration of `books.map`, i.e. get `book 2` and asks for its `book.reviews.load()` as well.

Ironically, this forced “nothing can block” model, that for years was the bane of JavaScript due to the pre-`Promise` callback hell it caused, gives Joist (via dataloader) an opportunity to wait just a *little bit*, until all of the `book.reviews.load()` have been “asked for”, and the `books.map` iteration is finished, to only then see that “ah, we’ve been asked to do 10 `book.reviews.load`, let’s do those as a single SQL statement”, and execute a single SQL statement like:

```sql

SELECT * FROM book_reviews WHERE book_id IN (1, 2, 3, ..., 10);

```

### Control Flow

[Section titled “Control Flow”](#control-flow)

It is a little esoteric, but dataloader implements this by automatically managing “flush” events in JavaScript’s event loop. Specifically, the event loop execution will look like (each “Tick” is a synchronous execution of logic on the event loop):

* Tick 1, call `books.map` for each book, and synchronously

* For book 1, call `load`, there is no existing “flush” event, so dataloader creates one at the end of the queue (i.e. to be invoked at the next tick), with `book:1` in it

* For book 2, call `load`, see there is already a queued “flush” event, so add `book:2` to it,

* For book `N`, call `load`, see there is already a queued “flush” event, so add `book:N` to it

* Tick 2, evaluate the “flush” event, with it’s 10 book ids kept in an array

* Tell Joist “load all 10 books”

* Joist issues a single SQL statement

* Tick 3, SQL statement resolves, Joist tells dataloader “okay, here are the reviews for each of the 10 books”, when the dataloader:

* Resolves book 1’s promise with its respective reviews

* Resolves book 2’s promise with its respective reviews

* Resolves book `N`’s promise with its respective reviews

* Tick 4, continue book 1’s `async` function, now with `reviews` populated

* Tick 5, continue book 2’s `async` function, now with `reviews` populated

* …

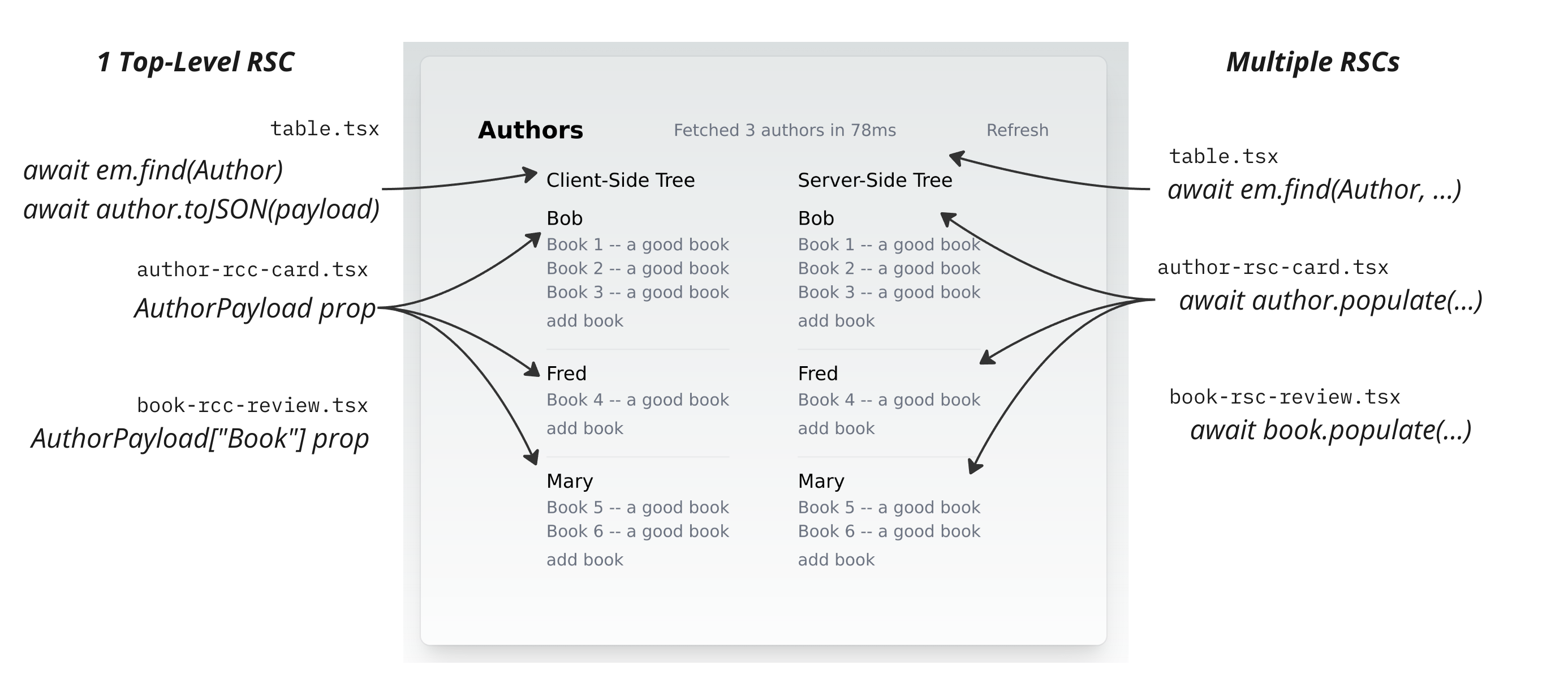

## N+1-Safe GraphQL Resolvers

[Section titled “N+1-Safe GraphQL Resolvers”](#n1-safe-graphql-resolvers)

Joist’s auto-batching works for any `em.load` calls (or lazy-load calls `author.books.load()`, etc.) that happen synchronously within a tick of the event loop.

This means that auto-batching works for either simple/obvious cases like calling `book.reviews.load()` in `books.map(book => ...)` lambda, or **disparately across separate methods** that are still invoked (essentially) simultaneously, which is exactly what happens with GraphQL resolvers.

For example, let’s say a GraphQL client has issued a query like:

```graphql

query {

authors(id: 1) {

books {

reviews {

id

name

}

}

}

}

```

We might implement our `books.reviews` resolver like:

```typescript

const booksResolver = {

async reviews(bookId, args, ctx) {

const book = await ctx.em.load(Book, bookId);

return await book.reviews.load();

}

}

```

And, the way the GraphQL resolver pattern works, the GraphQL runtime will call the `booksResolver.reviews(1)`, `booksResolver.reviews(2)`, `booksResolver.reviews(3)`, etc. method for each of the books returning from our query.

This looks like it could be an N+1, however because each of the `reviews(1)`, `reviews(2)`, etc. calls has happened within a single tick of the event loop, the dataloader “flush” event will automatically kick-in and ask Joist to look all of the reviews as a single SQL call.

Tip

Joist is GraphQL agnostic; you can use a different API layer, like REST or GRPC, we are just using GraphQL as an example due to its N+1 prone nature.

## How It Works

[Section titled “How It Works”](#how-it-works)

There are two primary components to Joist’s batching:

1. Graph navigation, and

2. `em.find` queries

### Graph Navigation

[Section titled “Graph Navigation”](#graph-navigation)

To avoid N+1s during graph navigation (using methods `author.books.load` or `book.author.load` to lazy load data), Joist maintains a dataloader per relation/per edge. For example if you do:

* `await author1.books.load()`

* `await author2.books.load()`

* `await author3.books.load()`

In a loop, the `Author.books` o2m relation has a dataloader that collects `author1`, `author2`, and `author3` entities in a list and then issues a SQL single statement for books with `WHERE author_id IN (1, 2, 3)`.

Joist has dataloader implementations for all the core relations involved in graph navigation: o2m, m2o, o2o, and m2m. Their implementations are straightforward and generally rock solid.

### Find Queries

[Section titled “Find Queries”](#find-queries)

Besides graph navigation, Joist will also auto-batch `em.find` queries, which are more adhoc `SELECT` queries (see [Find Queries](../features/queries-find.md)). For example if you do:

* `await em.find(Author, { firstName: "a1", lastName: "l1" })`

* `await em.find(Author, { firstName: "a2", lastName: "l2" })`

* `await em.find(Author, { firstName: "a3", lastName: "l3" })`

In a loop, then `em.find` will batch any `SELECT` statements that have the same joins and same filtering (essentially the same query structure) in a single statement that looks like:

```sql

WITH _find (tag, arg1) AS (VALUES

(1, 'a1', 'l1'),

(2, 'a2', 'l2'),

(3, 'a3', 'l3')

)

SELECT * FROM authors a

JOIN _find ON (a.first_name = _find.arg1 AND a.last_name = _find.arg2);

```

This approach leverages the Common Table Expression (CTE) of inline values and extra `JOIN` clause to essentially apply multiple `WHERE` clauses at once. This is admittedly more esoteric than Joist’s graph navigation dataloaders, but it achieves the goal of de-N+1-ing the queries.

Note

Joist’s `em.find` does not support `limit` or `offset` because they cannot be applied with the `JOIN` filtering approach. Instead, for `limit` and `offset` you can use `em.findPaginated`, although note that `findPaginated` will not auto-batch, so you should avoid calling it in a loop.

# Code Generation

> Documentation for Code Generation

One of the primary ways Joist achieves ActiveRecord-level productivity is by generating the boilerplate part of domain models from the database schema.

## Beautiful Domain Models

[Section titled “Beautiful Domain Models”](#beautiful-domain-models)

To see this in action, for an `authors` table, in Joist the initial `Author.ts` domain model is as clean & simple as:

```typescript

import { AuthorCodegen } from "./entities";

export class Author extends AuthorCodegen {}

```

And that’s it.

This is very similar to Rails ActiveRecord, where Joist automatically adds all the columns to the `Author` class for free, without having to re-type them in your domain object.

It does this for:

* Primitive columns, i.e. `first_name` can be set via `author.firstName = "bob"`

* Foreign key columns, i.e. `book.author_id` can be set via `book.author.set(...)`, and

* Foreign key collections, i.e. `Author.books` can be loaded via `await author.books.load()`.

* One-to-one relations, many-to-many collections, etc.

These columns/fields are added to the `AuthorCodegen.ts` file, which looks (redacted for clarity) something like:

```typescript

// This is all generated code

export abstract class AuthorCodegen extends BaseEntity {

readonly books = hasMany(bookMeta, "books", "author", "author_id");

readonly publisher = hasOne(publisherMeta, "publisher", "authors");

// ...

get id(): AuthorId | undefined { ... }

get firstName(): string { ... }

set firstName(firstName: string) { ...}

}

```

Tip

Note that, while ActiveRecord leverages Ruby’s runtime meta-programming to add getter & setters when your program starts up, Joist does this via build-time code generation (i.e. by running a `npm run joist-codegen` command).

This approach allows the generated types to be seen by the TypeScript compiler and IDEs, and so provides your codebase a type-safe view of your database.

## What is Generated?

[Section titled “What is Generated?”](#what-is-generated)

When running `npm run joist-codegen`, Joist will examine the database schema and generate:

* For each entity table (e.g. `authors`), an entity “codegen” file (`AuthorCodegen.ts`)

This file is written out **every time** and contains the boilerplate code that can be deterministically inferred from the database schema, from example:

* Fields for all primitive columns

* Fields for all relations (references like `Book.author` and collections like `Author.books`)

* Basic auto-generated validation rules (e.g. from not null constraints)

* For each entity table, an entity “working” file (`Author.ts`)

This file is written out **only once** and is where custom business logic and validation rules can go, without it being over-written by the next time `joist-codegen` runs.

* For each entity table, a factory file (`newAuthor.ts`)

This file provides tests with a succinct “one-liner” way to get a valid entity.

* A `metadata.ts` file with schema information.

## Evergreen Code Generation

[Section titled “Evergreen Code Generation”](#evergreen-code-generation)

Joist’s code generation runs continually (although currently invoked by hand, i.e. individual `npm run joist-codegen` commands), after every migration/schema change, so your domain objects will always 1-to-1 match your schema, without having to worry about keeping the two in sync.

### Custom Business Logic

[Section titled “Custom Business Logic”](#custom-business-logic)

Even though Joist’s code generation runs continually, it only touches the `Author.ts` once.

After that, all of Joist’s updates are made only to the separate `AuthorCodegen.ts` file.

This makes `Author.ts` a safe space to add any custom business logic you might need, separate from the boilerplate of the various getters, setters, and relations that are isolated into “codegen” base class, and always overwritten.

See [Lifecycle Hooks](../modeling/lifecycle-hooks.md) and [Reactive Fields](../modeling/reactive-fields.md) for examples of how to add business logic.

### Declarative Customizations (TODO)

[Section titled “Declarative Customizations (TODO)”](#declarative-customizations-todo)

If you do need to customize how a column is mapped, Joist *should* (these are not implemented yet) have two levers to pull:

1. Declare a schema-wide rule based on the column’s type and/or naming convention

In the `joist-config.json` config file, define all `timestampz` columns should be mapped as type `MyCustomDateTime`.

This would be preferable to per-column configuration/annotations because you could declare the rule once, and have it apply to all applicable columns in your schema.

2. Declare a specific user type for a column.

In the `joist-config.json` config file, define the column’s specific user type.

## Pros/Cons

[Section titled “Pros/Cons”](#proscons)

This approach (continual, verbatim mapping of the database schema to your object model) generally assumes you have a modern/pleasant schema to work with, and you don’t need your object model to look dramatically different from your database tables.

Joist’s assertion is that this strict 1-1 mapping is a feature, because it should largely help avoid the [horror stories of ORMs](https://blog.codinghorror.com/object-relational-mapping-is-the-vietnam-of-computer-science/), where the ORM is asked to do non-trivial translation between a database schema and object model that are fundamentally at odds.

## Why Schema First?

[Section titled “Why Schema First?”](#why-schema-first)

Joist’s approach is “schema first”, i.e. we first declare the database schema, and then generate the domain model from the database schema.

Along with “schema-first”, there generally three approaches to domain model/database mapping:

1. Schema-first (generate code from the schema database, like Joist)

2. Code-first (generate the schema from the code, i.e. from `@Column` and `@ManyToOne` annotations in the domain model)

3. No automatic generation either way, just map the two by hand

Joist’s assertion is that schema-first is the most pragmatic, b/c the database really is the “source of truth” for the data, and that code-first schema-generation does not scale once you have to production data that needs to be migrated that can sometimes, but not *always*, be migrated automatically.

(That said, code-first schema generates have gotten a lot more robust, so if you want to use a “model-first” schema management / migration library, that’s fine; you could define your model in that, use it to apply/manage your database schema, and then generate your Joist domain model from the database schema.)

# Great Tests

> Documentation for Great Tests

Joist focuses not just on great production code & business logic, but also on enabling great test coverage of your business logic, by facilitating tests that are:

1. Isolated,

2. Succinct, and

3. Fast

## Isolated Tests

[Section titled “Isolated Tests”](#isolated-tests)

Isolation is an important tenant of great tests, because any sort of “shared fixtures” or “shared environments” that couple automated tests to an ever-growing, ever-changing shared test data set eventually becomes very confusing to debug and very brittle to change.

With Joist, each unit test starts out with a clean database, and so is concerned only with the minimum amount of data it needs for its boundary case.

I.e. when you run:

```typescript

describe("Author", () => {

it("can have rule one", async () => {

const em = newEntityManager();

const a1 = em.create(Author, { firstName: "a1" });

await em.flush();

});

it("can have rule two", async () => {

const em = newEntityManager();

const a1 = em.create(Author, { firstName: "a1" });

await em.flush();

});

});

```

Each `it` block will see a clean/fresh database.

This is achieved by running a `flush_database` stored procedure in `beforeEach`:

```typescript

beforeEach(async () => {

await knex.select(knex.raw("flush_database()"));

});

```

Where the `flush_database` stored procured:

1. Is a single database invocation, so cheap to invoke

2. Knows the difference between entity tables and enum tables, and only `TRUNCATE`s entity tables

3. Resets sequences to restart from 1

4. Is only created in local testing environments, not production

Info

The `flush_database` stored procedure is created while running `npm run joist-codegen`, both because its body is generated based on your current schema (similar to the other `joist-codegen` output), and also because `joist-codegen` is generally only ran against a local development environment, which avoids having this stored procedure ever exist in production.

## Succinct Tests

[Section titled “Succinct Tests”](#succinct-tests)

Given each test starts with a clean database, Joist provides factories to easily create test data, so that the benefit of “a clean database” is not negated by lots of boilerplate code to re-create test data.

Factories can:

1. Accept values that are important to the test case being tested,

2. Fill in defaults for any other required fields/columns,

3. Also accept specific hints/flags to create re-usable “chunks” of data

For example, if you want to test an author with a book of the same name/title:

```typescript

const a1 = newAuthor({

firstName: "a1",

books: [{ title: "a1" }],

});

```

If either the `Author` or `Book` had other required fields, the `newAuthor` and `newBook` factories will apply them as needed.

See the [factories](../testing/test-factories.md) for more information on custom flags.

## Fast Tests

[Section titled “Fast Tests”](#fast-tests)

Slow tests can kill productivity and dis-incentivize testing in general, so Joist tries to make tests as fast as possible.

Joist does not have a specific approach/feature that enables fast tests, other than:

* The `flush_database` stored procedure makes db resets a single database call instead of `N` calls (i.e. 1 `DELETE` per table in your schema)

* Joist’s use of build-time code generation means it does not need to scan the schema at runtime/boot time.

In small projects, you can generally expect:

* A single file takes \~1 second to run (Jest will report \~100ms, but real time is higher)

* Individual `it` test cases take \~10ms to run

In larger projects (i.e. 100-150 tables), you can expect:

* A single file takes \~5 seconds to run (Jest will report \~1.5 seconds, but real time is higher)

* Individual `it` test cases take \~50ms to run

Tip

Note that the “5 seconds of wall clock time” for large projects, and in general the discrepancy between Jest time vs. actual wall clock time, can be mitigated by projects like `@swc/jest` and `@swc-node/register`, as in larger projects the bottleneck becomes Node `require`/`import`-ing source code and transpiling the TypeScript to JavaScript, instead of Joist / the database operations themselves.

Tip

When running Postgres locally for testing, you can run `postgres -c fsync=off` (i.e. passed as the `command` in your `docker-compose.yml` file) to put Postgres into a “sort of” in-memory mode, that is faster because transactions will not commit to disk before completing.

#### What is “Fast Enough?”

[Section titled “What is “Fast Enough?””](#what-is-fast-enough)

Granted, compared to true in-memory unit tests, these tests times are still \~5-10x slower, but the goal is that they are still “fast enough” given the benefit of still using the real database.

Sometimes applications will choose to mock out all database calls, with the goal of having strictly zero I/O calls during unit tests; granted, sometimes this approach can make sense, i.e. a frontend codebase mocking all GraphQL calls makes sense. But, for testing domain entities that are fundamentally tied to the database schema & persistence layer, it’s generally more pragmatic with Joist to just keep testing against the real database.

Info

Joist has explored an [InMemoryDriver](https://github.com/joist-orm/joist-orm/blob/main/packages/orm/src/drivers/InMemoryDriver.ts), that could potentially achieve “no I/O calls during unit tests”, with the idea that building this complexity into Joist itself might justify/amortize its expense, instead of complicating each application’s architecture.

However, so far the `InMemoryDriver` is not actually 10x faster than real Postgres tests (it’s maybe \~2-3x), and also does not support custom SQL queries, so for now its development is on pause. Rebooting it on top of [pg-mem](https://github.com/oguimbal/pg-mem) might be fun, to get custom SQL query support.

# Load-Safe Relations

> Documentation for Load-Safe Relations

Joist models all relations as async-by-default, i.e. you must access them via `await` calls:

```ts

const author = await em.load(Author, "a:1");

// Returns the publisher if already fetched, otherwise makes a (N+1 safe) SQL call

const publisher = await author.publisher.load();

// Now the comments...

const publisherComments = await publisher.comments.load();

// Now the books...

const books = await author.books.load();

```

We call this “load safe”, because the type system prevents you from accidentally accessing unloaded data, i.e. invoking `publisher.comments.length` before the `comments` are loaded, which in ORMs like TypeORM result in annoyingly-frequent runtime errors.

Joist’s “async by default” / “load safe” approach solves this, but then to improve ergonomics and avoid tedious `await` or `Promise.all` calls, Joist also supports marking relations as explicitly loaded, to enable synchronous `.get`, non-`await`-d access:

```ts

// Preload publisher, it's comments, and books

const author = await em.load(Author, "a:1", { publisher: "comments", books: {} });

// Now these can all be syncronous--no awaits!

const publisher = author.publisher.get;

const publisherComments = publisher.comments.get;

const books = author.books.get;

```

## Background

[Section titled “Background”](#background)

One of the main DX affordances of ORMs is that relationships (relations) between tables in the database (i.e. foreign keys) are modelled as references & collections on the classes/entities in the domain model.

For example, in most ORMs a `books.author_id` foreign key column means the `Author` entity will have an `author.books` collection (which loads all books for that author), and the `Book` entity will have a `book.author` reference (which loads the book’s author).

In all ORMs, these references & collections are inherently lazy: because you don’t have your entire relational database in memory, objects start out with just a single/few rows loaded (i.e. a single `authors` row with `id=1` loaded as an `Author#1` instance) and then lazily loaded the data you need from there (i.e. you “walk the object graph” from that `Author#1` to the related data you need).

## Async By Default

[Section titled “Async By Default”](#async-by-default)

Because of the inherently lazy nature of references & collections, Joist takes the strong, type-safe opinion that if they *might* be unloaded, then they *must* be marked as `async/await`.

For example, you have to access `author.books` via an `await`-d promise:

```typescript

const author = await em.load(Author, "a:1");

const books = await author.books.load();

```

And you must do this each time, even if technically in the code path that you’re in, you “know” that `books` has already been loaded, i.e.:

```typescript

const author = await em.load(Author, "a:1");

// Call another method that happens to loads books

someComplicatedLogicThatLoadsBooks(author);

// You still can't do `books.get`, even though "we know" (but the compiler

// does not know) that the collection is technically already cached in-memory

const books = await author.books.load();

```

## But Async is Kinda Annoying

[Section titled “But Async is Kinda Annoying”](#but-async-is-kinda-annoying)

While Joist’s “async by default” approach is the safest, it is admittedly tedious when you get to double/triple levels of `await`s, i.e. to go from an `Author` to their `Book`s to each `Book`’s `BookReview`s:

```typescript

const author = await em.load(Author, "a:1");

await Promise.all((await author.books.load()).map(async (book) => {

// For each book load the reviews

return Promise.all((await book.reviews.load()).map(async (review) => {

console.log(review.name);

}));

}));

```

Yuck.

Given this complication, some ORMs in the JavaScript/TypeScript space sometimes fudge the “collections must be async” approach, and allow you to model collections as *synchronous*, i.e. you’re allowed to do:

```typescript

const author = await em.load(Author, "a:1");

// I promise I loaded books

await author.books.load();

// Now access it w/o promises

author.books.get.length;

```

Which is nice! But the wrinkle is that we’re now trusting ourselves to only access `books` *after* an explicit `load`, and if we forget, i.e. when our code paths end up being complex enough that it’s hard to tell, then we’ll get a runtime error that `books.get` is not allowed to be called

Because of this lack of safety, Joist avoids this approach, and instead has something fancier.

## The Magic Escape Hatch

[Section titled “The Magic Escape Hatch”](#the-magic-escape-hatch)

Ideally what we want is to have relations lazy-by-default, except when we’ve explicitly told TypeScript that we’ve loaded them. This is what Joist does.

In Joist, populate hints (which tell the ORM to pre-fetch data before it’s actually accessed) also *change the type of the entity*, and mark relations that were explicitly listed in the hint as loaded.

This looks like:

```typescript

const book = await em.populate(

originalBook,

// Tell Joist we want `{ author: "publisher" } preloaded

{ author: "publisher" });

// The `populate` return type is now "special"/MarkLoaded `Book`

// that has `author` and `publisher` marked as "get"-able

expect(book.author.get.firstName).toEqual("a1");

expect(book.author.get.publisher.get.name).toEqual("p1");

```

Note that `originalBook`’s `originalBook.author` reference does *not* have `.get` available (just the safe `.load` which returns a `Promise`); only the modified `Book` type returned from `em.populate` has the `.get` method added `author.book`.

Tip

You can avoid having two `originalBook` / `book` variables by passing populate hints directly to `EntityManager.load`, which will then return the appropriate `.get`-able references:

```typescript

const book = await em.load(

Book,

"a:1",

{ author: "publisher" });

expect(book.author.get.firstName).toEqual("a1");

expect(book.author.get.publisher.get.name).toEqual("p1");

```

Joist’s `populate` approach also works for multiple levels, i.e. our triple-nested `Promise.all`-hell example can be written with a single `await`

```typescript

const author = await em.load(

Author,

"a:1",

{ books: "reviews" },

);

author.books.get.forEach((book) => {

book.reviews.get.forEach((review) => {

console.log(review.name);

});

})

```

## Load Hints as Backend Fragments

[Section titled “Load Hints as Backend Fragments”](#load-hints-as-backend-fragments)

Joist’s load hints can provide “GraphQL fragment like” encapsulation for helper methods that are invoked in one place, but have their data loaded in another.

For example, let’s define a helper method that generates `Book` overviews, from a subgraph (fragment) of data:

```ts

function generateOverview(

// define the subgraph of data we need

book: Loaded

): string {

const { author } = withLoaded(book);

// Whatever the business logic is..., note we're allowed to synchronously

// access anything in our Loaded subgraph

return [

book.title,

author.firstName,

author.publisher.get.name,

].join(",")

}

```

However, often times we end up having to “load the book data” far away from when `generateOverview` is actually invoked, like:

```ts

const books = await em.find(

Book,

{ ...someConditions... },

// remember to make the load hint here match `generateOverview`

{ populate: { author: "publisher" } },

);

// ...

// a lot of code/business logic...

// ...

for (const book of books) {

// Finally we call generateOverview

generateOverview(book);

}

```

Note that Joist’s type-safety will make sure the `generateOverview` call fails to type-check 💪, if the `em.find`’s `populate` type-hint drifts/does not overlap with the type declared by `generateOverview`.

Which is great, but in larger/more complex scenarios it can be tedious to keep these two in sync—the `generateOverview`’s type, and the `populate` load hint; when this happens, we can lean into TypeScript:

```ts

// Declare a const of the load type

const overviewHint = { author: "publisher" } satisifies LoadHint;

// And a type that uses the load hint, basically our fragment type

const OverviewBook = Loaded;

// now generateOverview uses the type

function generateOverview(book: OverviewBook): string {

// We can still access book.author/book.author.publisher synchronously

return "...business logic...";

}

// And we reference the const in find our:

const books = await em.find(Book, {}, { populate: overviewHint });

```

This dries up our `em.find` call, and makes it much more declarative about who/why we’re populating this data. And it helps `populate` hints from accumulating cruft over time, where their data was initially used, but now no longer necessary in the actual codepaths.

And because these `const`s and `type`s are “just regular TypeScript”, we can compose them, i.e.:

```ts

// Defined by `BookView`

const bookViewHint = "author" satisfies LoadHint;

// Defined by `AuthorView` that wants to also call `BookView`

// but also render its own per-book data

const authorHint = {

firstName: {},

books: { ...bookViewHint, "title": {} },

} satisifes LoadHint;

type LoadedAuthor = LoadHint;

```

Granted, you might have to deep merge sufficiently-complicated type hints—we don’t yet have a utility method to do that, but probably should!

## Best of Both Worlds

[Section titled “Best of Both Worlds”](#best-of-both-worlds)

This combination of “async by default” and “populate hint mapped types” brings the best of both worlds:

* Data that we are unsure of its loaded-ness, must be `await`-d, while

* Data that we (and, more importantly, the TypeScript compiler) are sure of its loaded-ness, can be accessed synchronously

# No Ugly Queries

> Documentation for No Ugly Queries

Historically, ORMs have a reputation for creating “ugly queries”, particularly when the ORM’s query API adds too much abstraction on top of raw SQL, and what “looks simple” in the query API is actually a big, gnarly SQL string that no programmer would ever write by hand.

These ugly queries can cause multiple issues:

* Performance issues b/c of their arcane output can’t be optimized by the database,

* Logic issues (bugs) b/c the generated SQL that doesn’t actually do what the programmer meant (leaky abstractions), and

* Just look weird in general.

And have caused a backlash of programmers who insist on writing every SQL query by hand.

Joist asserts this is a **false dichotomy**; we shouldn’t have to choose between:

* “Handwriting every line of SQL in our app”, and

* “The ORM generates ugly queries”

How does Joist solve this? By not trying so hard.

## Use mostly Joist, with some custom

[Section titled “Use mostly Joist, with some custom”](#use-mostly-joist-with-some-custom)

Joist “solves” ugly queries by just never even attempting them: it’s a non-goal for Joist to own “every SQL query in your app”.

Granted, we think Joist’s graph navigation & `em.find` APIs are powerful, ergonomic, and should be **the large majority** of SQL queries in your app: “get this author’s books”, “load the books & reviews & their ratings”, “load the Nth page of authors with the given filters”, etc.

However, we’ve limited them to **only features that can be implemented with “obviously boring SQL”**.

Instead, for any of your queries that are truly custom, and doing “hard, complicated things”, it’s perfectly fine to use a separate, lower-level query builder, or even raw SQL strings, to issue complicated queries.

These lower-level APIs put you in full-control of the SQL, at the cost of more verbosity and complexity—but sometimes that is the right tradeoff!

Tip

In one production Joist codebase, approximately 95% of the SQL queries were Joist-created graph navigation & `em.find` queries, and 5% were handwritten custom Knex queries.

This ratio will vary between codebases, but we feel confident it will be over 80%, and that the succinctness of using Joist for these 80-95% cases (with their guarantee to be “not ugly”), is a big productivity win.

## What we don’t support

[Section titled “What we don’t support”](#what-we-dont-support)

Specifically, today Joist does not support:

* Common Table Expressions

* Group bys, aggregates, sums, havings

* Loading/processing any query results that aren’t entities

* Probably much more

Granted, we don’t want to undersell our `em.find` API (it is great), but nor have we set out to “build a DSL to create every SQL query ever”.

That is just not Joist’s strength—our strength is ergonomically representing complicated business domains, and enforcing complicated business constraints, and that is a hard enough problem as it is. :-)

Instead, we encourage you to use lower-level libraries like Knex for your app’s custom queries.

Info

Obviously having multiple full-fledged libraries, i.e. Joist for the domain model and Kysley for low-level queries, is not a great solution, and probably overkill.

Personally, we use Knex for our low-level custom queries (those 5%), because it’s lightweight and sufficiently ergonomic.

Joist may eventually provide a “raw SQL” query builder, that is Knex-ish, but it will be a completely separate API from `em.find`, to avoid any slippery slopes to `em.find` becoming a leaky abstraction and creating “ugly queries”.

# Overview

> Documentation for Goals

Joist’s mission is to help you build great domain models.

The original inspiration was to bring [ActiveRecord](https://guides.rubyonrails.org/active_record_basics.html)-level productivity to TypeScript projects, but with bullet-proof N+1 prevention, and bringing reactivity to the backend, we have arguably already surpassed that goal.

Joist’s primary features are:

* [Code Generation](./code-generation.md) to move fast and remove boilerplate

* [Bullet-Proof N+1 Prevention](./avoiding-n-plus-1s.md) through first-class [dataloader](https://github.com/graphql/dataloader) integration

* Type-safe tracking of [Loaded vs. Unloaded Relations](./load-safe-relations.md)

* Bringing [Reactivity to the Backend](../modeling/reactive-fields.md)

* Robust [Domain Modeling](../modeling/fields.md)

* [Great testing](./great-tests.md) with built-in factories and other support

* A promise of [No Ugly Queries](/goals/no-ugly-queries)

# Performance

> Joist's performance philosophy

Joist has a nuanced stance on performance: we assert Joist-written code will issue *less queries* and *more efficient* queries than the “default” day-to-day code written by most engineers.

This is different from saying Joist will “always be the fastest way to perform any every database query”—it won’t! There are times when carefully-crafted, handwritten SQL queries are best.

But for most day-to-day code, Joist performs optimizations that most engineers won’t bother doing:

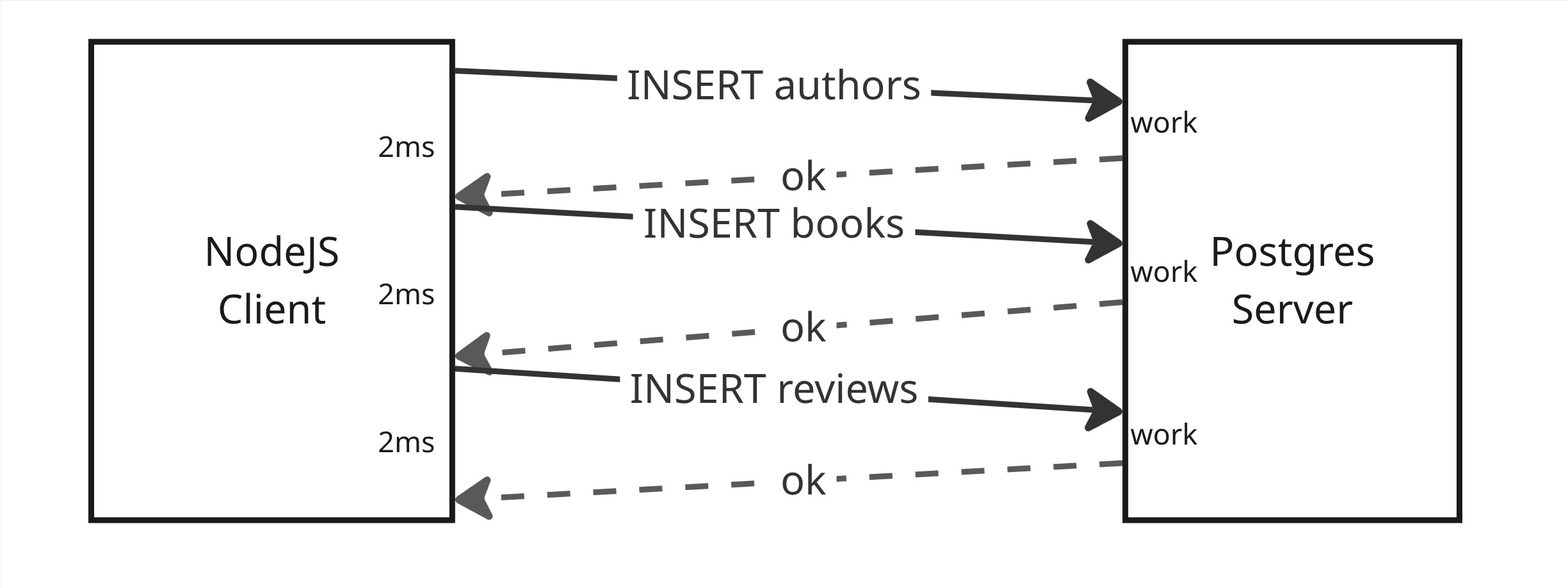

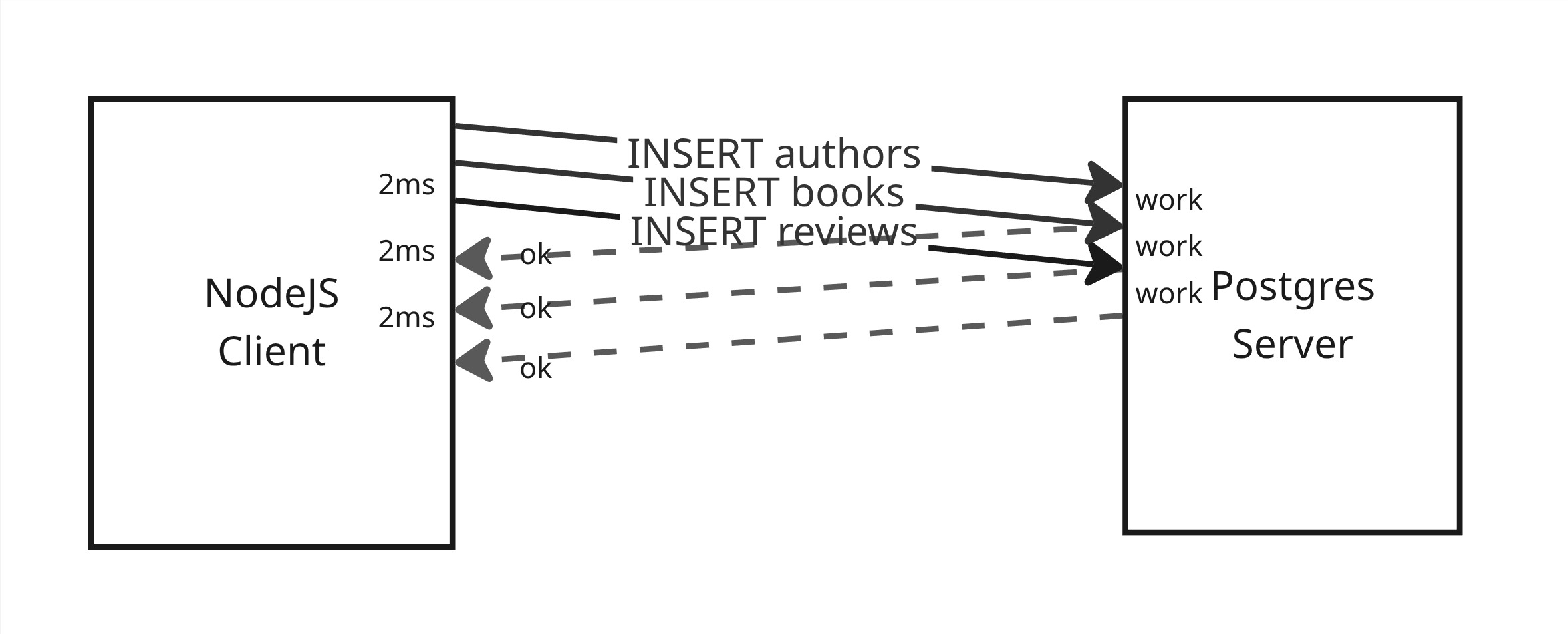

* Joist always batches SQL updates during writes (`em.flush`),

* Joist always prevents N+1s during reads (`em.load` & `em.find`),

* Joist always caches entities in an identity map,

* Joist always uses `unnest` to reduce query parameter explosion in large operations

None of these individual optimizations themselves are novel; but they’re each a little esoteric, each require remembering the best way to leverage them, and each might require restructuring your code/SQL queries a certain way pull—all decisions that engineers should not have to re-remember to do for every endpoint, for every workflow, for every piece of business logic.

Our optimizations focus on **reducing the total number of database queries** your code executes. So instead of 10 queries with 1-5ms of latency each, you only do 5 queries with the same 1-5ms latency each. Given that waiting on I/O is the bottleneck for most web applications, this can lead to significant performance improvements.

Joist is the best way for your application to leverage these techniques—by putting down the latest query builder dejure and letting us the handle (most of!) the queries for you.

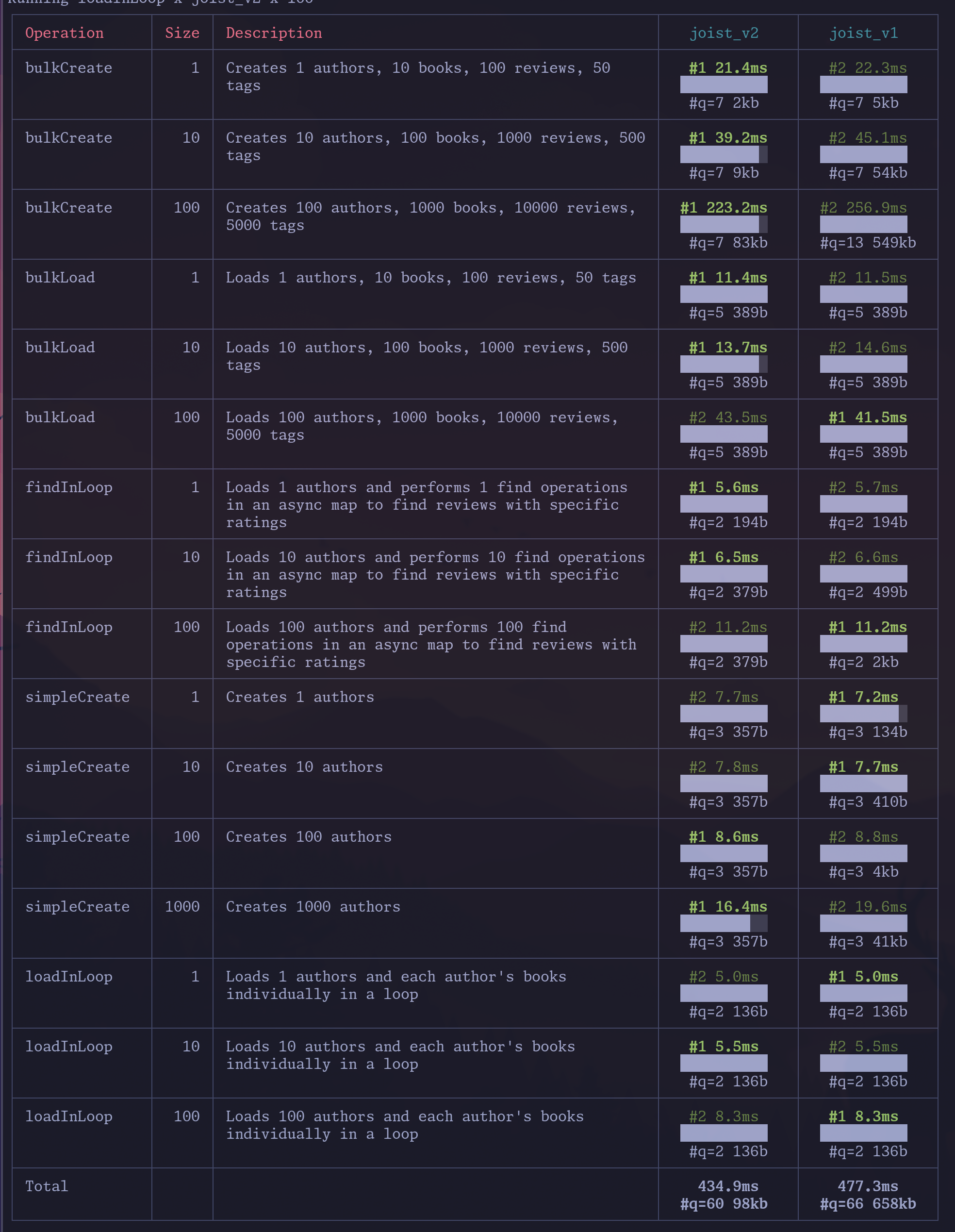

## Benchmarks

[Section titled “Benchmarks”](#benchmarks)

We also care about [benchmarks](https://github.com/joist-orm/joist-benchmarks) (and do surprisingly well on them!).

We say “surprisingly” somewhat in jest, because we care about performance—but we also care about maintainability. And testing. And having a codebase that doesn’t suck after five years of a rotating team of engineers working in it. 😅

We love to geek out on performance optimizations 🚀, but always while balancing the trade-offs in real-world codebases. In large, 20k or 500k or 1m LOC applications, engineers are either going to **forget** (at worst), or ideally **just not have to care about**, the optimizations that Joist performs all the time, every time.

If anything, the point of our benchmarks is not necessarily “we expect Joist to always be the fastest”, but rather given how much *other stuff* Joist provides you, with negligible overhead or even *net performance wins*, it should be a no-brainer to use Joist for your application.

# Derived Properties

> Documentation for Derived Properties

In Joist, Derived Properties are values that can be calculated/derived from other data within your domain model, for example:

* Deriving an Author’s `fullName` from their `firstName` and `lastName`

* Deriving an Author’s `numberOfBooks` from their `books` collection

Derived Properties **are not stored in the database**, but are calculated on-the-fly when accessed. Joist also supports [Reactive Fields](./reactive-fields), which are similar to Derived Properties but **are stored in the database**.

## Sync Properties

[Section titled “Sync Properties”](#sync-properties)

Synchronous properties calculate their value from other values immediately available on the same entity; because of this, they can always be accessed, and are just getters:

```ts

class Author {

get fullName(): string {

return this.firstName + (this.lastName ? ` ${this.lastName}` : "");

}

}

```

## Async Properties

[Section titled “Async Properties”](#async-properties)

Asynchronous Properties calculate their value from the entity and other related child/parent entities.

For example, to implement an `Author`’s `numberOfBooks` property that requires counting the Author’s `books` collection, use `hasProperty` with a populate hint stating it depends on the `books` collection:

```typescript

export class Author {

readonly numberOfBooks: Property = hasProperty(

// Declare the relations to load

"books",

// Only `a.books` will be marked as loaded

(a) => { a.books.get.length }

);

}

```

Because this calculation fundamentally requires having the `books` loaded, it is marked as `async` and requires loading with a populate hint to access:

```typescript

// Load an author without any populate hints

const a1 = await em.load(Author, "a:1");

// `.get` is not available, so `numberOfBooks` requires an await

const num1 = await a1.numberOfBooks.load();

// Load the author with `numberOfBooks` populated

const a2 = await em.load(Author, "a:1", "numberOfBooks");

// `.get` is now available and can be called immediately

const num2 = a2.numberOfBooks.get;

```

Like populate hints, `hasProperty`s can used nested hints:

```typescript

export class Author {

readonly latestComments: Property = hasProperty(

// Pass a nested load hint

{ publisher: "comments", comments: {} },

// `a` will have the deep relations loaded

(a) => [...(a.publisher.get?.comments.get ?? []), ...a.comments.get],

);

}

```

## Reactive Getters

[Section titled “Reactive Getters”](#reactive-getters)

If you want to access derived properties, like the `fullName` getter in the first example, from [Reactive Fields](./reactive-fields), Joist needs to know which specific fields `fullName` depends.

You can do this by using `hasReactiveGetter`, which declares the business logic’s dependencies:

```typescript

class Author {

readonly fullName: ReactiveGetter = hasReactiveGetter(

"fullName",

// Declare the other fields we depend on

["firstName", "lastName"],

// `a` will be limited to using only `firstName` and `lastName`

a => a.firstName + (a.lastName ? ` ${a.lastName}` : ""),

);

}

```

Now, even though `Author.fullName` itself is not stored in the database, if any **other** reactive values want to depend on `Author.fullName`, Joist will know when the `fullName` value becomes dirty, and those downstream values should be recalculated.

`ReactiveGetter`s are limited to depending on fields directly on the entity itself, which means they can be accessed at any time, without being loaded:

```typescript

// Load the author, without any populate hint

const a = await em.load(Author, "a:1");

// We can still call the fullName logic

console.log(a.fullName.get);

```

## Reactive Async Properties

[Section titled “Reactive Async Properties”](#reactive-async-properties)

Similar to Reactive Getters, if you have a [Reactive Field](./reactive-fields) that wants to depend on an Async Property, you need to declare the property’s **field-level** dependencies by using `hasReactiveProperty`:

```typescript

export class Author {

readonly numberOfBooks: Property =

hasReactiveProperty(

// Now this is a field-level reactive hint

{ books: "title" },

// `a` can only access fields declared by the hint

(a) => a.books.get.filter((b) => b.title !== undefined).length,

);

}

```

This is similar to regular `hasProperty`s, except that the hint declares the specific fields that the lambda uses, and the lambda will be restricted from using any field not declared in the hint.

# Enums

> Documentation for Enums

Joist supports enum tables for modeling fields that can be set to a fixed number of values (i.e. a `state` field that can be `OPEN` or `CLOSED`, or a field `status` field that can be `ACTIVE`, `DRAFT`, `PENDING`, etc.)

### What’s an Enum Table

[Section titled “What’s an Enum Table”](#whats-an-enum-table)

Enum tables are a pattern where each enum (`Color`) in your domain model has a corresponding table (`colors`) in the database, with rows for each enum values.

For example, for a `Color` enum with values of `Color.RED`, `Color.GREEN`, `Color.Blue`, the `color` table would look like:

```console

joist=> \d color;

Table "public.color"

Column | Type | Nullable | Default

--------+---------+----------+-----------------------------------

id | integer | not null | nextval('color_id_seq'::regclass)

code | text | not null |

name | text | not null |

Indexes:

"color_pkey" PRIMARY KEY, btree (id)

"color_unique_enum_code_constraint" UNIQUE CONSTRAINT, btree (code)

```

With rows for each value:

```console

joist=> select * from color;

id | code | name

----+-------+-------

1 | RED | Red

2 | GREEN | Green

3 | BLUE | Blue

(3 rows)

```

Which are codegen’d into TypeScript enums:

```typescript

export enum Color {

Red = "RED",

Green = "GREEN",

Blue = "BLUE",

}

```

And then other domain entities use foreign keys to point back to valid values:

```console

\d authors

Table "public.authors"

Column | Type | Nullable | Default

--------------------+--------------------------+----------+----------------------------------------

id | integer | not null | nextval('authors_id_seq'::regclass)

name | character varying(255) | not null |

favorite_color_id | integer | |

created_at | timestamp with time zone | not null |

updated_at | timestamp with time zone | not null |

Indexes:

"authors_pkey" PRIMARY KEY, btree (id)

"authors_favorite_color_id_index" btree (size_id)

Foreign-key constraints:

"authors_favorite_color_id_fkey" FOREIGN KEY

(favorite_color_id) REFERENCES color(id)

```

## Why Tables?

[Section titled “Why Tables?”](#why-tables)

There are multiple ways to model enums, i.e. other options are database-native enums (which Joist does support, see below), or using enum values declared solely within your codebase.

Joist generally recommended/refers the enum table pattern because:

* The foreign keys enforce data integrity at the database-level

(Database-native enums do this as well, codebase-only enums would not.)

* Ability to store `code` vs. `name`.

Although minor, it’s nice to have a dedicated `name` field to store the display name for enum values, and have them available in the database for updating/looking up.

* Ability to add extra columns (see later)

Joist supports adding addition columns to the code, so like `color.customization_cost` could be an additional column on the `color` table that Joist will automatically expose to the domain layer.

* Changing enum values is generally simpler DML instead of DDL

With a `color` table, adding/removing new values is just `INSERT`s / `UPDATE`s, whereas database-native enums require `ALTER`s to change the type.

## Enum Details and Extra Columns

[Section titled “Enum Details and Extra Columns”](#enum-details-and-extra-columns)

Besides the basic `Color` enum, Joist generates “details” types, i.e. `ColorDetails` that include more information about each enum:

```typescript

export type ColorDetails = { id: number; code: Color; name: string };

const details: Record = {

[Color.Red]: { id: 1, code: Color.Red, name: "Red" },

[Color.Green]: { id: 2, code: Color.Green, name: "Green" },

[Color.Blue]: { id: 3, code: Color.Blue, name: "Blue" },

};

```

Which you can lookup via static methods on the `ColorDetails` class:

```typescript

export const Colors = {

getByCode(code: Color): ColorDetails;

findByCode(code: string): ColorDetails | undefined;

findById(id: number): ColorDetails | undefined;

getValues(): ReadonlyArray;

getDetails(): ReadonlyArray;

};

```

Also, as mentioned before, if you add additional columns to the `color` table, they will be added to the `ColorDetails` type, i.e.:

```typescript

b.addColumn("color", { sort_order: { type: "integer", notNull: true, default: 1 } });

```

Will result in a `ColorDetails` that looks like:

```typescript

export type ColorDetails = {

id: number;

code: Color;

name: string;

sortOrder: 1 | 2 | 3;

};

```

Currently, “extra details columns” only supports primitive columns (integers, strings, etc.), i.e. not other enums, JSONB columns, or arrays.

## Integrated with Testing

[Section titled “Integrated with Testing”](#integrated-with-testing)

During tests, `flush_database` will skip enum tables, so they do not need to be re-populated each time.

## Enum Arrays

[Section titled “Enum Arrays”](#enum-arrays)

If you want to store a list of enums in a single column (for example, instead of just `Author.favoriteColor`, you want `Author.favoriteColors`), Joist supports modeling that as a `int[]` column, i.e.:

```console

joist=> \d authors;

Table "public.authors"

Column | Type | Nullable | Default

------------------+--------------------------+----------+-----------------------------------

--

id | integer | not null | nextval('authors_id_seq'::regclass

)

first_name | character varying(255) | not null |

favorite_colors | integer[] | | ARRAY[]::integer[]

created_at | timestamp with time zone | not null |

updated_at | timestamp with time zone | not null |

Indexes:

"authors_pkey" PRIMARY KEY, btree (id)

```

Note that Postgres does not yet support foreign key constraints on array columns, so you’ll lose that aspect of data integrity with enum arrays.

Also, because of this lack of foreign key constraint, Joist cannot use that to know “what enum type is this column?”

As an admittedly hacky approach, we encode that information in a schema comment:

```typescript

b.addColumns("authors", {

favorite_colors: {

type: "integer[]",

comment: `enum=color`,

notNull: false,

default: PgLiteral.create("array[]::integer[]"),

},

});

```

## When to Use Enums

[Section titled “When to Use Enums”](#when-to-use-enums)

In general, you should only use enums when you have business logic that directly branches based on the values.

For an example, if your system has a list of “markets”, and you only have \~2-3 markets, it can be tempting to think of `Market` as an enum, because currently there are only a few of them. And if you make it an enum, then `flush_database` will not reset the `market` table, so you don’t have to keep adding test data that is “we have markets 1/2/3”.

However, now adding/removing new markets changes the `Market` enum, and so has to be coordinated with deployments. And renaming/removing `Market`s is a breaking change.

So, unless if you have codepaths that are explicitly dedicated to `Market 1` codepath is “chunk of business logic” and `Market 2` codepath is “different chunk of business logic”, these “small lookup tables” are generally better modeled as just regular entities.

## Native Enums

[Section titled “Native Enums”](#native-enums)

While Joist generally prefers enum tables, if you have native enums in your schema, Joist will work for those as well.

Note that you don’t get enum details, or extra columns, but the basic out of “a TypeScript” enum and `Author.favoriteColor` is typed as the `Color` enum will work.

# Fields

> Documentation for Fields

Fields are the primitive columns in your domain model, so all of the (non-foreign key) `int`, `varchar`, `datetime`, etc. columns.

For these columns, Joist automatically adds getters & setters to your domain model, i.e. an `authors.first_name` column will have getters & setters added to `AuthorCodegen.ts`:

```ts

// This code is auto-generated

class AuthorCodegen {

get firstName(): string {

return getField(this, "firstName");

}

set firstName(firstName: string) {

setField(this, "firstName", firstName);

}

}

```

## Optional vs Required

[Section titled “Optional vs Required”](#optional-vs-required)

Joist’s fields model `null` and `not null` appropriately, e.g. for a table like:

```plaintext

Table "public.authors"

Column | Type | Nullable

--------------+--------------------------+----------+

id | integer | not null |

first_name | character varying(255) | not null |

last_name | character varying(255) | |

```

The `Author` domain object will type `firstName` as a `string`, and `lastName` as `string | undefined`:

```typescript

class AuthorCodegen {

get firstName(): string { ... }

set firstName(firstName: string) { ... }

get lastName(): string | undefined { ... }

set lastName(lastName: string | undefined) { ... }

}

```

### Using `undefined` instead of `null`

[Section titled “Using undefined instead of null”](#using-undefined-instead-of-null)

Joist uses `undefined` to represent nullable columns, i.e. in the `Author` example, the `lastName` type is `string | undefined` instead of `string | null` or `string | null | undefined`.

The rationale for this is simplicity, and Joist’s preference for “idiomatic TypeScript”, which for the most part has eschewed the “when to use `undefined` vs. `null` in JavaScript?” decision by going with “just use `undefined`.”

### String Trimming and Coercion

[Section titled “String Trimming and Coercion”](#string-trimming-and-coercion)

Joist applies reasonable/opinionated defaults to handling string values, specifically:

* Leading/trailing spaces are trimmed

* Empty string `""` is replaced with `undefined` (becomes `null` in the db)

This is to avoid “silly mistakes” like a `first_name=""` or `first_name=' bob'` getting into the database, and throwing off business logic, i.e. that might otherwise have detected `first_name=bob` as a duplicate (but missed `" bob"`), or `first_name=""` as a missing required field.

If you want to disable this behavior, setting `DEFAULT=''` on the database column will give Joist the hint that, for this column, it’s actually desired to let the empty string value be saved to the database, so we will keep empty strings, and also disable the leading/trailing space trimming.

If you need finer-grained control over this behavior, it could be configurable via the `joist-config.json` file, we just have not implemented that yet.

### Type Checked Construction

[Section titled “Type Checked Construction”](#type-checked-construction)

The non-null `Author.firstName` field is enforced as required on construction:

```typescript

// Valid

em.create(Author, { firstName: "bob" });

// Not valid

em.create(Author, {});

// Not valid

em.create(Author, { firstName: null });

// Not valid

em.create(Author, { firstName: undefined });

```

And for updates made via the `set` method:

```typescript

// Valid

author.set({ firstName: "bob" });

// Valid, because `set` accepts a Partial

author.set({});

// Not valid

author.set({ firstName: null });

// Technically valid b/c `set` accepts a Partial, but is a noop

author.set({ firstName: undefined });

```

### Partial Updates Semantics

[Section titled “Partial Updates Semantics”](#partial-updates-semantics)

While within internal business logic `null` vs. `undefined` is not really a useful distinction, when building APIs `null` can be a useful value to signify “unset” (vs. `undefined` which typically signifies “don’t change”).

For this use case, domain objects have a `.setPartial` that accepts null versions of properties:

```typescript

// Partial update from an API operation

const updateFromApi = {

firstName: null

};

// Allowed

author.setPartial(updateFromApi);

// Outputs "undeifned" b/c null is still translated to undefined

console.log(author.firstName);

```

Note that, when using `setPartial` we have caused our `Author.firstName: string` getter to now be incorrect, i.e. for a currently invalid `Author`, clients might observe `firstName` as `undefined`.

See [Partial Update APIs](/features/partial-update-apis) for more details.

## Protected Fields

[Section titled “Protected Fields”](#protected-fields)

You can mark a field as protected in `joist-config.json`, which will make the setter `protected`, so that only your entity’s internal business logic can call it.

The getter will still be public.

```json

{

"entities": {

"Author": {

"fields": {

"wasEverPopular": { "protected": true }

}

}

}

}

```

## Field Defaults

[Section titled “Field Defaults”](#field-defaults)

### Schema Defaults

[Section titled “Schema Defaults”](#schema-defaults)

If your database schema has default values for columns, i.e. an integer that defaults to 0, Joist will immediately apply those defaults to entities as they’re created, i.e. via `em.create`.

This gives your business logic immediate access to the default value that would be applied by the database, but without waiting for an `em.flush` to happen.

### Dynamic Defaults

[Section titled “Dynamic Defaults”](#dynamic-defaults)

If you need to use `async`, cross-entity business logic to set field defaults, you can use the `config.setDefault` method:

```typescript

/** Example of a synchronous default. */

config.setDefault("notes", (b) => `Notes for ${b.title}`);

/** Example of an asynchronous default. */

config.setDefault("order", { author: "books" }, (b) => b.author.get.books.get.length);

```

Any `setDefault` without a load hint (the 1st example) must be synchronous, and will be *applied immediately* upon creation, i.e. `em.create` calls, just like the schema default values.

Any `setDefault` with a load hint (the 2nd exmaple) can be asynchronous, and will *not be applied until `em.flush()`*, because the `async` nature means we have to wait to invoke them.

Info

We could probably add an async `em.assignDefaults`, similar to `em.assignNewIds`, to allow code to trigger async default assignment, without kicking off an `em.flush`.

### Hooks

[Section titled “Hooks”](#hooks)

You can also use `beforeCreate` hooks to apply defaults, but `setDefault` is preferred because it’s the most accurate modeling of intent, and follows our general recommendation to use hooks sparingly.

# JSONB Fields

> Documentation for JSONB Fields

Postgres has rich support for [storing JSON](https://www.postgresql.org/docs/current/datatype-json.html), which Joist supports.

### Optional Strong Typing

[Section titled “Optional Strong Typing”](#optional-strong-typing)

While Postgres does not apply a schema to `jsonb` columns, this can often be useful when you do actually have/know a schema for a `jsonb` column, but are using the `jsonb` column as a more succinct/pragmatic way to store nested/hierarchical data than as strictly relational tables and columns.

To support this, Joist supports both the [superstruct](https://docs.superstructjs.org/) library and [Zod](https://zod.dev/), which can describe both the TypeScript type for a value (i.e. `Address` has both as a `street` and a `city`), as well as do runtime validation and parsing of address values.

That said, if you do want to use the `jsonb` column effectively as an `any` object, the additional typing is optional, and you’ll just work with `Object`s instead.

### Approach

[Section titled “Approach”](#approach)

We’ll use an example of storing an `Address` with `street` and `city` fields within a single `jsonb` column.

#### Zod

[Section titled “Zod”](#zod)

First, define a [Zod](https://zod.dev/) schema for the data you’re going to store in `src/entities/types.ts`:

```typescript

import { z } from "zod";

export const Address = z.object({

street: z.string(),

city: z.string(),

});

```

Then tell Joist to use this `Address` schema for the `Author.address` field in `joist-config.json`:

```json

{

"entities": {

"Author": {

"fields": {

"address": {

"zodSchema": "Address@src/entities/types"

}

},

"tag": "a"

}

}

}

```

Now just run `joist-codegen` and the `AuthorCodegen`’s `address` field use the `Address` schema using Zod’s `z.input` and `z.output` inference in setter and getter respectively.

#### Superstruct

[Section titled “Superstruct”](#superstruct)

First, define a [superstruct](https://docs.superstructjs.org/) type for the data you’re going to store in `src/entities/types.ts`:

```typescript

import { Infer, object, string } from "superstruct";

export type Address = Infer;

export const address = object({

street: string(),

city: string(),

});

```

Where:

* `address` is a structure that defines the schema/shape of the data to store

* `Address` is the TypeScript type system that Superstruct will derive for us

Then tell Joist to use this `Address` type for the `Author.address` field in `joist-config.json`:

```json

{

"entities": {

"Author": {

"fields": {

"address": {

"superstruct": "address@src/entities/types"

}

},

"tag": "a"

}

}

}

```

Note that we’re pointing Joist at the `address` const.

Now just run `joist-codegen` and the `AuthorCodegen`’s `address` field use the `Address` type.

### Current Limitations

[Section titled “Current Limitations”](#current-limitations)

There are few limitations to Joist’s current `jsonb` support:

* Joist currently doesn’t support querying / filtering against `jsonb` columns, i.e. in `EntityManager.find` clauses.

In theory this is doable, but just hasn’t been implemented yet; Postgres supports quite a few operations on `jsonb` columns, so it might be somewhat involved. See [jsonb filtering support](https://github.com/joist-orm/joist-orm/issues/230).

Instead, for now, can you use raw SQL/knex queries and use `EntityManager.loadFromQuery` to turn the low-level `authors` rows into `Author` entities.

* Joist currently loads all columns for a row (i.e. `SELECT * FROM authors WHERE id IN (...)`), so if you have particularly large `jsonb` values in an entity’s row, then any load of that entity will also return the `jsonb` data.

Eventually [lazy column support](https://github.com/joist-orm/joist-orm/issues/178) should resolve this, and allow marking `jsonb` columns as lazy, such that they would not be automatically fetched with an entity unless explicitly requested as a load hint.

# Lifecycle Hooks

> Documentation for Lifecycle Hooks

Joist supports hooks that can run business logic at varies stages in an entity’s lifecycle, for example to implement business logic like “when an `Author` entity is updated, always do x/y/z”.

Hooks are not immediately ran on `em.create` or entity modifications, and only run as part of `em.flush()` because `em.flush()` is an async method, and this allows hooks to themselves have async behavior, i.e. load additional entities from the database.

### Setup

[Section titled “Setup”](#setup)

All hooks are set up by the entity’s `config` API:

```typescript

import { authorConfig as config } from "./entities";

export class Author extends AuthorCodegen {}

// Create a draft book for all authors

config.beforeCreate("books", (a, { em }) => {

if (a.books.get.length === 0) {

em.create(Book, { author: a, status: BookStatus.Draft });

}

});

```

Info

At first, it seems odd that Joist’s hooks are not methods on the class itself, as this would be a more traditional place for ORM-driven business logic.

However, being added via the `config` API has a few benefits:

1. The hook methods all take load hints, i.e. `"books"` in the above `beforeCreate` example, which makes the `a` param typed as `Loaded` instead of `Author`.

This allows the hook’s business logic to be written with as few `await`s as possible, such that ideally the lambda itself can be synchronous (although you can make it `async` if necessary).

If `beforeCreate` was written as a method, then an additional local variable (similar to `a`) would need to be created, as `this` is not aware of the hook’s load hint.

2. It’s easier to keep business logic small & decoupled, because if you have multiple operations to perform on `beforeCreate`, you can have two entirely separate hooks, each with separate load hints and their own lambdas.

If `beforeCreate` was a single `Author.beforeCreate` method, then its implementation would just get bigger and more complex as it handles additional business requirements.

3. It’s trivial to reuse hook logic across entities without relying on multiple inheritance.

For example, we could have a method like `addSoftDeleteHooks(config)` that, for any given entity’s config, adds some shared business logic to the entity.

### Available Hooks

[Section titled “Available Hooks”](#available-hooks)

Joist supports the following hooks, listed in the order that they are fired during `em.flush`:

* `beforeCreate` fired when an entity is created / `INSERT`-d for the first time

* `beforeUpdate` fired when an entity is updated / `UPDATE`-d

* `beforeFlush` fired when an entity is either created or updated (but not deleted)

* `beforeDelete` fired when an entity is deleted / `DELETE`-d

* `afterValidation` fired after an entity is created or updated, and all validation rules have passed

* `beforeCommit` fired when an entity is created, or updated, or deleted and the transaction is about to commit, can abort the transaction by throwing an error

* `afterCommit` fired when an entity is created, or updated, or deleted and the transaction has committed

### Allowed Behavior

[Section titled “Allowed Behavior”](#allowed-behavior)

`beforeCreate`, `beforeUpdate`, `beforeFlush`, and `beforeDelete` hooks are allowed to create/update/delete other entities.

For example, a new `Author` can use a `beforeCreate` hook to automatically `em.create` the author’s first/default `Book`. Or a deleted `Author` could `em.delete` its `Book`s in an `Author.beforeDelete` hook (Joist also has a dedicated `config.cascadeDelete` API, but `beforeDelete` can handle more custom behavior).

Any entities that are created/updated/deleted by a hook will themselves have their appropriate hooks ran, although only if those entity’s hooks have not already been run (to avoid cycles of a book-touches-author/author-touches-book infinitely recursing).

`afterValidation`, `beforeCommit`, and `afterCommit` are not allowed to mutate entities.

#### Wire Calls

[Section titled “Wire Calls”](#wire-calls)

Making RPC calls to 3rd party systems can be problematic, and so we recommend:

* Do not make RPC calls from any non-`afterCommit` hook.

It is very likely that hooks (like `beforeFlush`) will run, but then your `em.flush` later fails due to validation rules, at which point your transaction/changes won’t be committed, and you’ve likely made an unnecessary/incorrect wire call.

* Only pragmatically make wire calls in the `afterCommit` hook.

While `afterCommit` is the “safest” place to make a wire call, because it’s only called after the transaction has been committed, there is still a chance that either a) `em.flush` commits but the machine crashes before running `afterCommit`, or b) your `afterCommit` fails but now will not retry.

Because of these wrinkles, our best advice is to use the [job drain](https://brandur.org/job-drain) pattern, and use a `beforeCommit` hook to transactionally enqueue jobs in your primary database.

The `beforeCommit` hook runs after entities have been `INSERT`d or `UPDATE`d, and so will have access to entity ids, which can be used for background job parameters/payloads.

These background jobs create “intentions of work to be done”, and since the job is atomically saved to the database in the same transaction as your business logic writes (for example inserting a `sendOnboardingEmail` job into the `jobs` table and `INSERT`ing a new `authors` row), they are both guaranteed to complete or not-complete. And then the background job runner can separately invoke (and retry if necessary) the intended action of calling/syncing with the 3rd party system.

### Hooks vs. Validation Rules

[Section titled “Hooks vs. Validation Rules”](#hooks-vs-validation-rules)

Hooks run before validation rules, and are allowed to mutate entities that may currently be invalid.

Validation rules run after hooks, and are not allowed to mutate entities: they must be side effect free.

For example, you could have a validation rule of “Author must have at least one book”, and a hook that “creates a default book for new authors”, and when you do `em.create(Author)` without any books, then first the hook would run and create a single book, such that when the validation rule runs, it passes.

Similarly, hooks can set required fields before the missing values trigger validation rules.

Validation rules are only ran once per `em.flush`, and only after all hooks, and all transitively-ran hooks, have finished.

Info

The term “transitively-ran” hooks describes the scenario of:

* An endpoint/user code creates 5 new `Author` entities and calls `em.flush`

* `em.flush` “runs hooks” (`beforeCreate` and `beforeFlush`) for all 5 new `Author`s entities

* Each `Author`’s `beforeCreate` hook creates a new draft `Book` entity

* `em.flush` notices the newly-created `Book` entities, and so “runs hooks again”, but only against the 5 `Book` entities

So, this process is transitive as mutating the initial set of entities may cause, via custom logic in hooks, a subsequent set of entities to be mutated, which themselves might cause an additional set of entities to be mutated, until the process “settles”.

Note that because `em.flush` marks which entities have had hooks ran, and will not invoke hooks twice on a given entity, this process is guaranteed to finish, i.e. there is not a risk of infinite loops between hooks.

## afterMetadata

[Section titled “afterMetadata”](#aftermetadata)

`afterMetadata` is an additional hook that is not associated with an entity’s lifecycle, but instead called once during the boot process.

This can be useful if you want to set up hooks for multiple entities, but need to make sure all entity constructors have been defined (which happens incrementally during the `import` / `require` process).

For example, if you’re using polymorphic references and want to setup a hook for each entity in the union:

```typescript

/** Add rules to each of our polymorphic entities. */

config.afterMetadata(() => {

getParentConstructors().forEach((cstr) => {

// Get each entity's config and add a hook

getMetadata(cstr).config.beforeCreate((e) => {});

});

});

```

# Reactions

> Documentation for Reactions

Reactions are a powerful feature that sits between [Lifecycle Hooks](./lifecycle-hooks) and [Reactive Fields](./reactive-fields), allowing you to run custom business logic whenever specific fields or relations change during flush or recalc.

## Differences from other features

[Section titled “Differences from other features”](#differences-from-other-features)

Reactions differ from Reactive Fields in that they:

* **Can make arbitrary changes** to any entity

* **Receives a `Loaded`** as its first parameter rather than a `Reacted` allowing arbitrary access

Reactions differ from Lifecycle Hooks in that they:

* **Only run when their hint changes**, not on every flush

* **Can run when the entity has no direct changes**, such as when a related entity changes

* **Can run multiple times per flush** as the reactivity graph settles

* **Takes a [reactive hint](./reactive-fields/#always-up-to-date)** rather than a simpler load hint

Comparison table with Hooks and Reactive Fields:

| Feature | Hooks | Reactions | Reactive Fields / References |

| ----------------------------- | ----- | --------- | ---------------------------- |